Learning from failed model predictions

It’s 2024 and a new year to delve into mathematical oncology! This is a great time to pause and reflect on where the research field is heading. Undeniably, a central part of contemporary mathematical oncology entails fitting mathematical models to bio-medical data. The ongoing surge in bio-medical data is exciting and, as a modeller, there is nothing quite like the feeling of seeing your model beautifully predict unseen data. When this happens, you high-five your collaborators, have a good night’s sleep, and prepare to publish! But what happens when your model predictions do not match (all) unseen data? This blog post is about that.

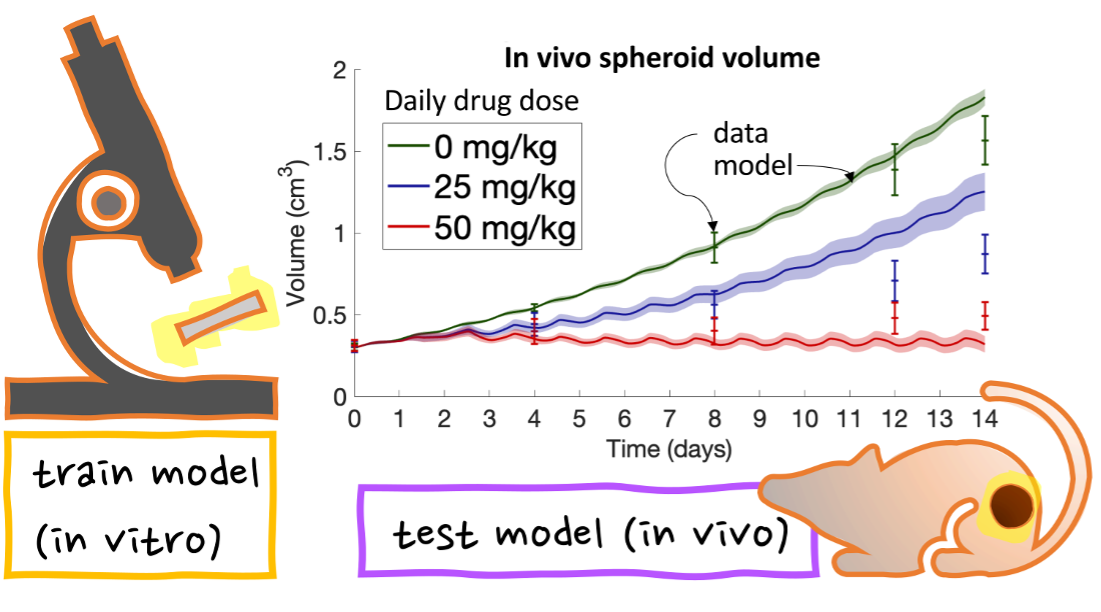

Let’s start at the beginning. A typical workflow for making a predictive mathematical model can be broken into two phases: the training phase and the testing phase. In the training phase, we build our model and train it on seen training data. This phase often includes many iterations of refining and editing the model, but eventually, we arrive at a point where the model and training data agree. Thereafter, we evaluate how well the model predicts unseen test data in the testing phase. In this phase, the work gets really intriguing and we can start debating if our model actually has predictive abilities (or not!).
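To make the two phases concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the exponential growth model, the function names (`fit_exponential`, `predict`, `relative_error`), and the synthetic numbers are hypothetical and are not the model or data from any of our studies.

```python
import numpy as np

def fit_exponential(t, y):
    """Training phase: fit y = y0 * exp(r * t) by least squares on log(y)."""
    slope, intercept = np.polyfit(t, np.log(y), 1)
    return np.exp(intercept), slope  # (y0, r)

def predict(t, y0, r):
    """Evaluate the fitted exponential model at times t."""
    return y0 * np.exp(r * t)

def relative_error(y_true, y_pred):
    """Pointwise relative error between data and model prediction."""
    return np.abs(y_pred - y_true) / y_true

# Synthetic, noise-free data for illustration only (not real measurements).
t_train = np.arange(0, 6, dtype=float)    # "seen" days 0..5
y_train = 100.0 * np.exp(0.3 * t_train)
t_test = np.arange(6, 10, dtype=float)    # "unseen" days 6..9
y_test = 100.0 * np.exp(0.3 * t_test)

# Training phase: estimate parameters from the seen data only.
y0_hat, r_hat = fit_exponential(t_train, y_train)

# Testing phase: compare predictions against the unseen data.
test_error = relative_error(y_test, predict(t_test, y0_hat, r_hat))
```

The key design choice is that the test data never touch `fit_exponential`; they are only used afterwards, to judge the prediction.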

In one of our previous studies, we trained a model on in vitro data (cells in a dish) and tested it on in vivo data (tumour spheroids in mice). We adjusted the model rules to first describe in vitro cells in the training phase, and then describe tumour spheroids in the testing phase. The model could predict spheroid growth for 12 days when no drugs were given to the mice. Interestingly, when the mice were given drugs, the model could only predict the test data for the first 8 out of 14 days. What does this mean? Is the model “right” or “wrong”?

I’ll avoid the George Box quote “All models are wrong, but some are useful” and instead say this: I am glad that the model can predict tumour dynamics for the first 8 (but not all 14) days. Why? Because it makes sense! Let me elaborate. As tumour spheroids grow in mice, a number of biological processes related to blood vessel formation, immune responses, and drug resistance may be initiated. Our model did not account for any of these processes. Therefore, it is reasonable to expect the model to predict tumour spheroid growth until the effects of the listed processes kick in. I chat more about this with host Parmvir Bahia in the podcast series Biology in Numbers, which the Society for Mathematical Biology launched last year.
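One way to put a number on "the model predicts the first few days" is to count how many consecutive time points the prediction stays within a chosen tolerance of the data. The sketch below is only an illustration of that idea: the function name `prediction_horizon`, the 20% tolerance, and the toy numbers are all assumptions, not the criterion or the data used in our study.

```python
import numpy as np

def prediction_horizon(observed, predicted, tolerance=0.2):
    """Count the leading time points where the relative error is within tolerance.

    Returns the index of the first time point that breaks the tolerance,
    or the number of time points if none does.
    """
    observed = np.asarray(observed, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    within = np.abs(predicted - observed) / np.abs(observed) <= tolerance
    failures = np.flatnonzero(~within)
    return int(failures[0]) if failures.size else len(observed)

# Toy numbers for illustration only: the "data" level off, while the
# "model" keeps growing because it lacks the process that slows growth.
observed = [10.0, 12.0, 14.0, 15.0, 15.5]
predicted = [10.0, 12.5, 15.0, 19.0, 24.0]

horizon = prediction_horizon(observed, predicted, tolerance=0.2)
print(horizon)  # 3: the first three time points are within 20%
```

A horizon shorter than the full experiment is then a prompt to ask which unmodelled process might start to matter around that time.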

Now going into 2024, let’s remember that in science, as in life, we learn from our failures. And sometimes those failures offer great opportunities for us to reflect upon why [our model prediction] failed. By doing so, we can learn and challenge the status quo.

Credits

The title image was generated using DALL-E.

© 2026 - The Mathematical Oncology Blog